From UCLA 29/11/23

FINDINGS

An experimental computing system, modeled after the biological brain, achieved 93.4% accuracy in identifying handwritten numbers.

This success is attributed to a novel training algorithm that provides real-time feedback during the learning process.

This algorithm outperformed traditional machine learning: by comparison, a conventional approach that trains only after processing batches of data reached 91.4% accuracy.
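The difference in *when* feedback arrives can be illustrated with a minimal sketch. It is not the study's algorithm and does not reproduce the 93.4% versus 91.4% comparison; it assumes a simple linear readout trained with the least-mean-squares (delta) rule as a stand-in, and the toy task and the names `online_epoch` and `batch_epoch` are hypothetical.

```python
import random

def predict(w, x):
    """Linear readout: weighted sum of the input features."""
    return sum(wi * xi for wi, xi in zip(w, x))

def online_epoch(w, data, lr):
    """Update the weights after every single sample (real-time feedback)."""
    w = list(w)
    for x, y in data:
        err = y - predict(w, x)          # feedback from this one sample
        for i in range(len(w)):
            w[i] += lr * err * x[i]      # LMS / delta-rule step
    return w

def batch_epoch(w, data, lr):
    """Accumulate the gradient over the whole batch, then update once."""
    grad = [0.0] * len(w)
    for x, y in data:
        err = y - predict(w, x)          # every error uses the same, stale weights
        for i in range(len(w)):
            grad[i] += err * x[i]
    return [wi + lr * g / len(data) for wi, g in zip(w, grad)]

# Hypothetical toy task: learn y = 2*x0 - 1*x1 from noiseless samples.
random.seed(0)
data = [((a, b), 2 * a - b) for a, b in
        ((random.uniform(-1, 1), random.uniform(-1, 1)) for _ in range(200))]

w_online = online_epoch([0.0, 0.0], data, lr=0.1)
w_batch = batch_epoch([0.0, 0.0], data, lr=0.1)
print("online after one pass:", [round(v, 3) for v in w_online])
print("batch after one pass: ", [round(v, 3) for v in w_batch])
```

After one pass over the same 200 samples, the online learner has made 200 small corrections while the batch learner has made a single one, so only the online weights end up close to the target `[2, -1]`.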

The research also showed that the system’s memory of past inputs, stored within the network itself, enhanced its learning capabilities.

In contrast, conventional computing approaches rely on separate software or hardware for memory storage, distinct from the processor.

BACKGROUND

For 15 years, researchers at the California NanoSystems Institute at UCLA (CNSI) have been working on a new computational technology.

This brain-inspired system consists of a network of nanoscale wires containing silver, situated on electrodes.

The system operates by receiving and outputting data through electrical pulses.

Unlike conventional computers with fixed atomic structures in their memory and processing modules, this nanowire network physically adapts to stimuli.

The network’s memory is based on its atomic structure and is distributed throughout the system, similar to synaptic connections in a biological brain.
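The idea that past inputs leave a physical trace in the network can be sketched with a toy junction model. The update rule, the `gain` and `decay` parameters, and the function name `run_junction` are hypothetical assumptions chosen for illustration; they are not a model of the real silver-selenium junctions.

```python
def run_junction(pulses, gain=0.5, decay=0.2):
    """Hypothetical junction: conductance g rises with each voltage pulse
    and relaxes toward 0 between pulses. For pulses in [0, 1] and
    gain <= 1, g stays within [0, 1]."""
    g = 0.0
    trace = []
    for v in pulses:
        g += gain * v * (1.0 - g)   # potentiation, saturating at g = 1
        g -= decay * g              # spontaneous relaxation (fading memory)
        trace.append(g)
    return trace

# Two input histories that end with the same final pulse.
seq_a = [1.0, 1.0, 0.0, 0.5]
seq_b = [0.0, 0.0, 0.0, 0.5]
final_a = run_junction(seq_a)[-1]
final_b = run_junction(seq_b)[-1]
print(round(final_a, 3), round(final_b, 3))  # prints 0.469 0.2
```

The final states differ because the earlier pulses in `seq_a` have not fully decayed, so the junction's state encodes part of its input history. A long run of zero-voltage steps lets the state relax back toward zero, which is one simple form of fading memory.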

Collaborators at the University of Sydney developed a specialized algorithm to manage input and output, leveraging the system’s dynamic and parallel data processing abilities.

METHOD

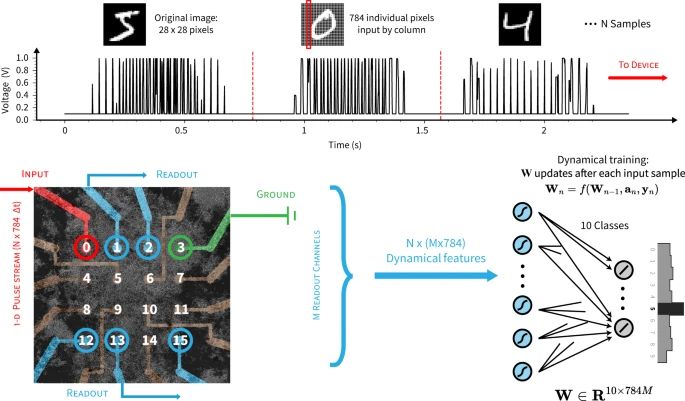

The system was built with a material comprising silver and selenium, forming a network of entangled nanowires above 16 electrodes.

Researchers trained and tested the network using images of handwritten numbers from a standard dataset.

The images were communicated to the system pixel-by-pixel via electrical pulses, with varying voltages indicating different pixel shades.
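Pixel-by-pixel encoding can be sketched as a linear map from grayscale value to pulse voltage. The 8-bit pixel range, the linear mapping, the row-by-row scan order, the 0 to 1 voltage window, and the name `image_to_pulses` are assumptions for illustration; the source does not state the voltages actually used.

```python
def image_to_pulses(image, v_min=0.0, v_max=1.0):
    """Flatten a 2-D grayscale image into a sequence of pulse voltages.

    Assumes 8-bit pixels (0 = black, 255 = white) mapped linearly onto the
    hypothetical voltage window [v_min, v_max], scanning row by row.
    """
    pulses = []
    for row in image:
        for pixel in row:
            if not 0 <= pixel <= 255:
                raise ValueError(f"pixel value out of 8-bit range: {pixel}")
            pulses.append(v_min + (v_max - v_min) * pixel / 255.0)
    return pulses

tiny = [[0, 255],
        [51, 102]]
print(image_to_pulses(tiny))  # prints [0.0, 1.0, 0.2, 0.4]
```

Each pixel becomes one pulse in time, so an image is presented to the network as a sequence rather than all at once.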

IMPACT

The nanowire network, which is still in development, is expected to be more energy-efficient than current silicon-based AI systems performing similar tasks.

It also shows potential for processing complex, time-varying data such as weather or traffic patterns, which current AI struggles to handle without massive amounts of data and energy.

The study’s co-design approach, in which hardware and software were developed together, suggests that nanowire networks could complement traditional electronic devices.

With its brain-like memory and adaptive learning capabilities, the network could excel in “edge computing,” processing complex data locally without relying on distant servers.

Applications could span robotics, autonomous navigation in vehicles and drones, Internet of Things technology, health monitoring, and coordinating multi-location sensor data.
